Background

Last year, eBay Ads launched a new campaign type, Promoted Listings Express (PLX), which lets eBay sellers boost visibility for their auction-style listings with just a few clicks and a single flat, upfront fee. Over the past year, our research team worked to optimize how we merchandise these promoted auction items. Recommending these items is a multi-sided customer challenge: it presents opportunities to surface amazing auction inventory to eBay buyers and to help sellers boost visibility for their auction listings.

Ranking Iterations

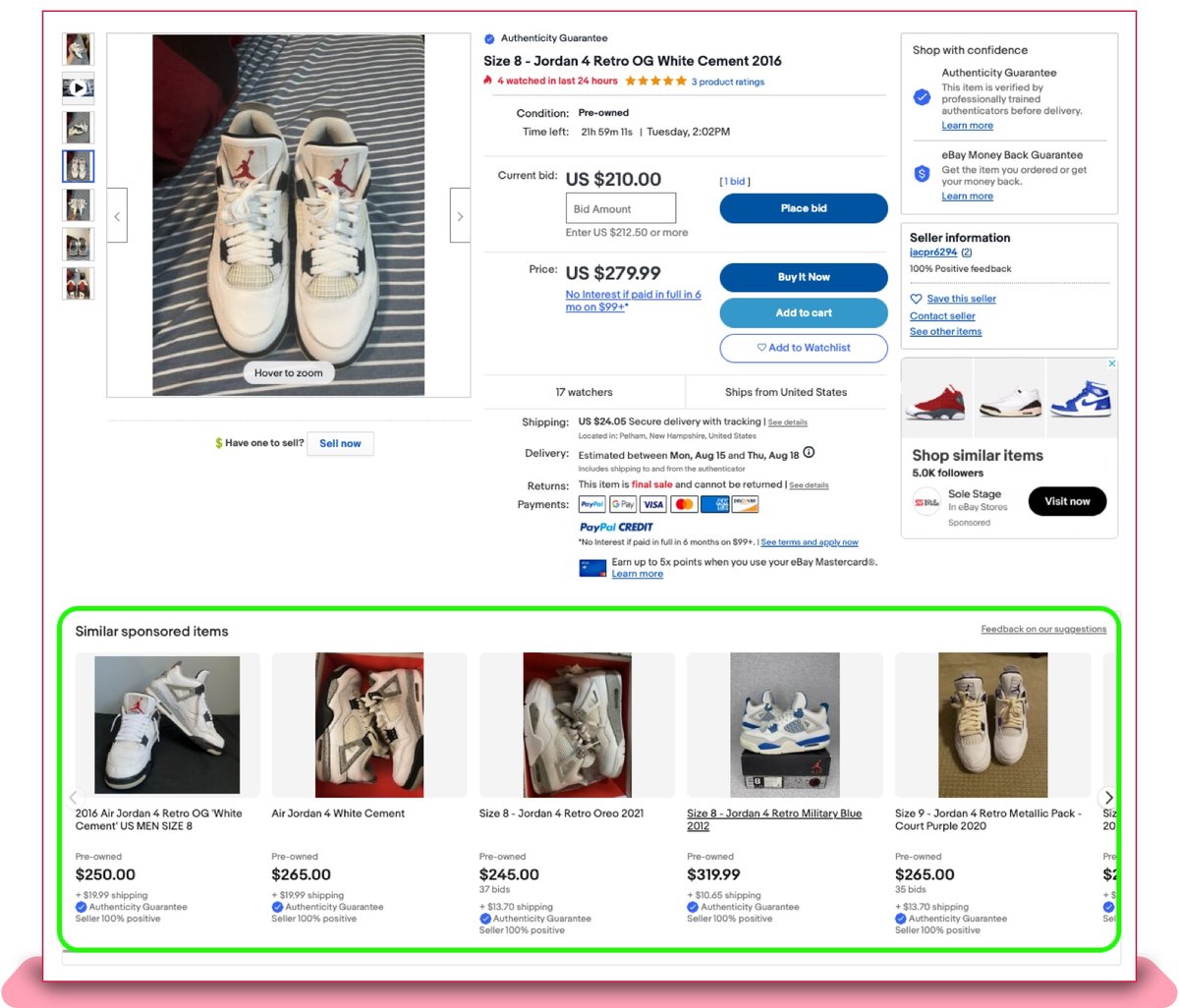

Promoted auction items currently surface on a number of fixed slots in the Similar Sponsored Item recommendations, which are similar items with respect to the main item on the eBay View Item page. This main item is also known as the “seed” item. An example recommendation set is highlighted below:

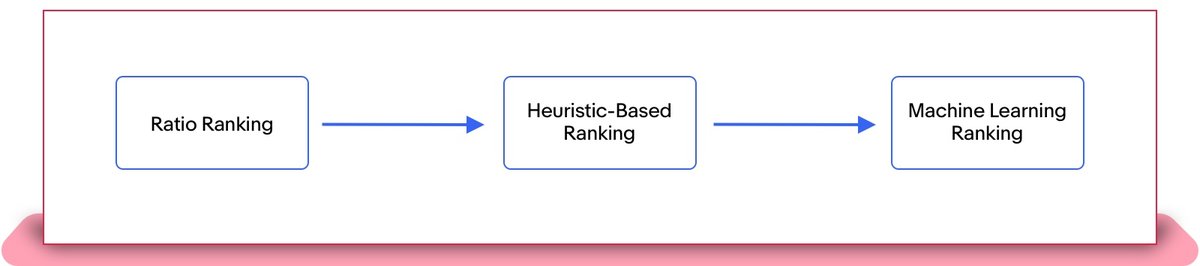

To determine which PLX listings are shown in these fixed slots, we use ranking techniques that assign a score to the listings on the basis of multiple relevance and revenue parameters. There were three sequential iterations to improve the item ranking methodology:

Ratio Ranking

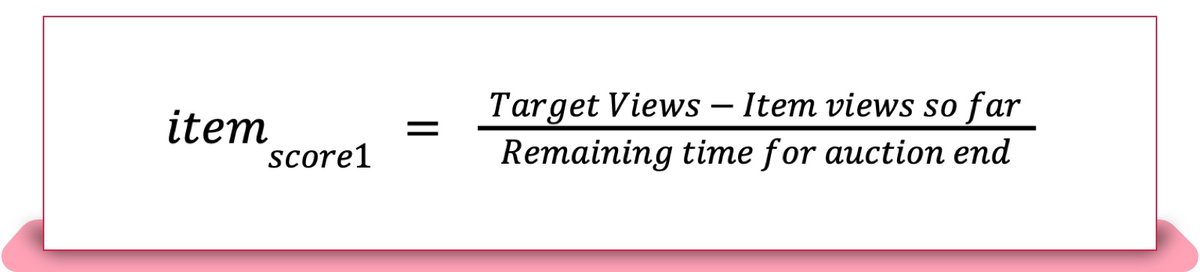

The first iteration of ranking PLX items prioritizes achieving seller listing view targets during the auction period. In this approach, PLX items for the allocated slots of Similar Sponsored Item recommendations were selected using a weighted random scheme. The weights for the random selection, represented below as itemscore1, are equal to the number of remaining views to satisfy the target threshold divided by the time left in the auction.

Target views are defined by the eBay Analytics team based on the number of additional daily views that promoted auction items need in order to achieve higher conversion and more perceived value to sellers than organic auction items. The time remaining until the auction ends is measured in seconds, and the itemscore1 values of all PLX items are then normalized to lie between 0 and 1.

Overall, the ratio ranking technique prioritizes showing the item in urgent need of views to meet view targets and ensures views are distributed across items evenly through the weighted random selection.
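The ratio score and the weighted random pick can be sketched in Python as follows. This is a minimal illustration, not the production implementation: the `PlxItem` fields, the function names, normalizing by the maximum score, and sampling without replacement are all assumptions made for the example.

```python
import random
from dataclasses import dataclass


@dataclass
class PlxItem:
    # Hypothetical record; the field names are illustrative only.
    item_id: str
    target_views: int
    current_views: int
    seconds_left: float


def raw_ratio_score(item):
    """Views still needed to reach the target, divided by seconds left in the auction."""
    remaining_views = max(item.target_views - item.current_views, 0)
    return remaining_views / max(item.seconds_left, 1.0)  # guard against division by zero


def item_score_1(items):
    """Normalize raw scores into [0, 1]; dividing by the maximum is an assumed scheme."""
    raw = {it.item_id: raw_ratio_score(it) for it in items}
    top = max(raw.values(), default=0.0)
    return {k: (v / top if top > 0 else 0.0) for k, v in raw.items()}


def pick_for_slots(items, n_slots, rng=None):
    """Weighted random selection without replacement, using itemscore1 as the weight.

    Items that already met their target have weight 0 and are skipped here
    (an assumption; the article does not say how such items are handled).
    """
    rng = rng or random.Random()
    scores = item_score_1(items)
    pool = [it.item_id for it in items if scores[it.item_id] > 0]
    chosen = []
    while pool and len(chosen) < n_slots:
        pick = rng.choices(pool, weights=[scores[i] for i in pool], k=1)[0]
        chosen.append(pick)
        pool.remove(pick)
    return chosen
```

Because selection is random rather than a strict top-k, an item with a low score still has a chance of being shown, which is what spreads views across the inventory.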

Heuristic-Based Ranking

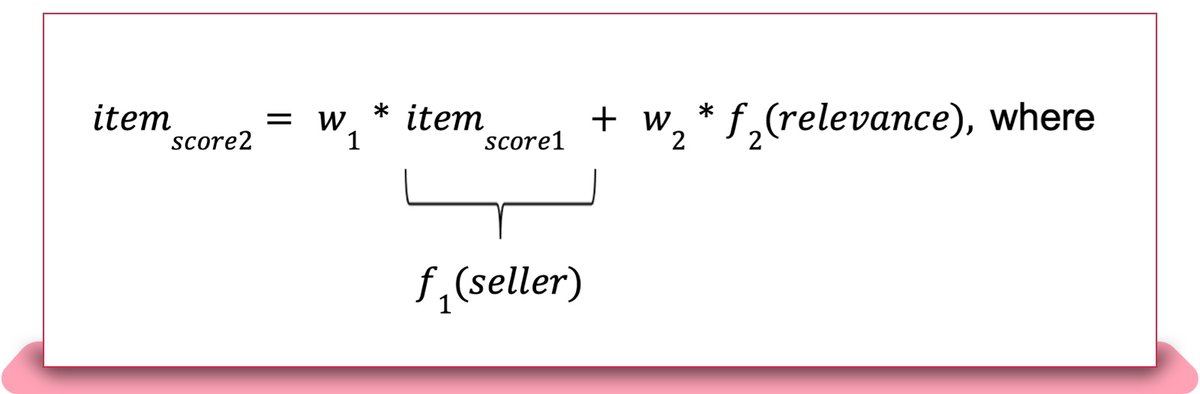

The above ratio-based approach posed a challenge to recommendation relevance. In the subsequent heuristic-based approach, we aimed to improve the value of those views for sellers by driving higher buyer engagement, as outlined in the equation below.

f1(seller) accounts for the required visibility boost to the view count of an item and is equal to itemscore1. f2(relevance) is a linear weighted sum between 0 and 1, calculated from the auction item’s relevance features and defined below.

Here, title and price similarity are the similarity scores of the auction item’s title string and price to the seed item’s title string and price. a1, a2 and a3 are weights of f2(relevance) which are manually chosen.
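As a rough sketch of how such a score could be assembled, the snippet below combines the two components linearly. The linear form `w1 * f1 + w2 * f2`, the use of historical CTR as the third relevance feature, the Jaccard title similarity, the price-ratio similarity, and every weight value are assumptions for illustration; the article does not state the actual similarity functions or weights.

```python
def title_similarity(seed_title, item_title):
    """Jaccard overlap of lowercase title tokens (an assumed similarity measure)."""
    a = set(seed_title.lower().split())
    b = set(item_title.lower().split())
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def price_similarity(seed_price, item_price):
    """Ratio of the smaller to the larger price, in [0, 1] (an assumed measure)."""
    if seed_price <= 0 or item_price <= 0:
        return 0.0
    return min(seed_price, item_price) / max(seed_price, item_price)


def f2_relevance(title_sim, price_sim, ctr, a=(0.5, 0.3, 0.2)):
    """Linear weighted sum of relevance features.

    The placeholder weights sum to 1, so the result stays in [0, 1]
    as long as each feature is in [0, 1].
    """
    a1, a2, a3 = a
    return a1 * title_sim + a2 * price_sim + a3 * ctr


def item_score_2(f1_seller, f2_rel, w1=0.4, w2=0.6):
    """Blend the seller-side score (f1 = itemscore1) with buyer-side relevance (f2)."""
    return w1 * f1_seller + w2 * f2_rel
```

Keeping the weights as explicit parameters mirrors the offline analysis described next, where several candidate values are compared before one set is chosen.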

During offline analysis, we experimented with multiple values for w1, w2, a1, a2 and a3. We set two criteria to select the best weight values from offline analysis for the A/B test.

1. Increase in relevance of items shown to buyer

2. Increase in views to whole promoted auction inventory

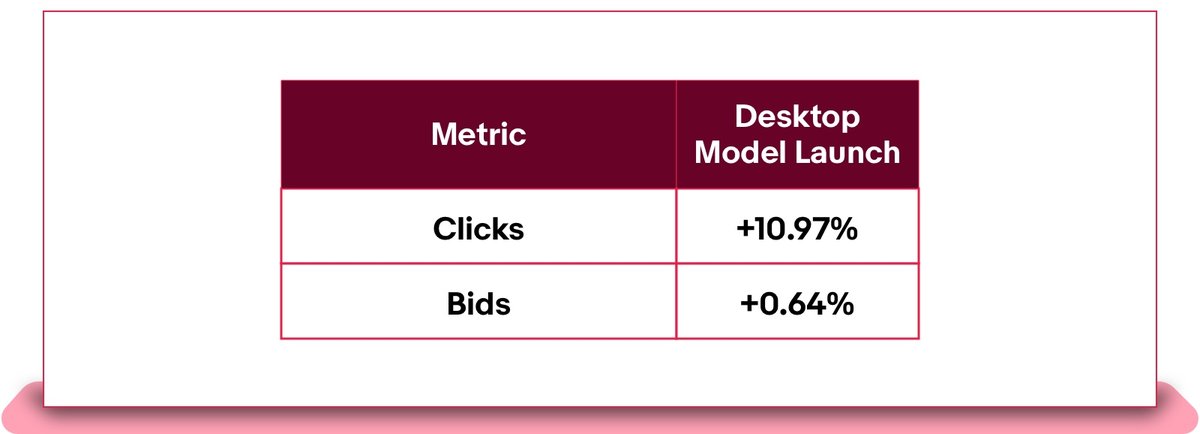

To evaluate (1), we used click-through rate (CTR) along with price similarity and title string similarity to the seed item. For (2), we evaluated view count distribution and coverage over one week for promoted auction inventory. The weight values that fulfilled both criteria were selected for the online A/B test. The A/B test results below, for desktop users with the ratio-based ranking approach as the control, indicate that the revised ranking score increased buyer engagement for promoted auction items due to the increase in recommendation relevance.

Despite the improved performance, this approach has a few clear drawbacks. First, the weights within f2(relevance) components are set manually to keep the score value interpretable rather than optimized algorithmically. Additionally, f2(relevance) only considers a handful of features to capture all the relevance characteristics, as manually determining the weights for all features would be a tedious task. Lastly, itemscore2 tries to optimize both seller and buyer targets simultaneously, which assumes the distribution of both components in the score is similar; this assumption is not always true as new listings don’t have historical CTR data.

Machine Learning Ranking

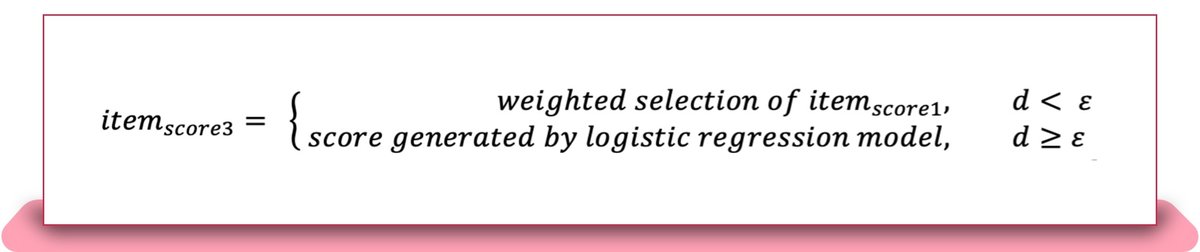

The current approach leverages machine learning to provide an even better match between the seed item being viewed and the PLX items we recommend. It is motivated by the epsilon-greedy algorithm: for every online inference, we generate a random decimal d between 0 and 1. If d is greater than ε, a logistic regression model generates the item score; if d is less than ε, the item score is equal to itemscore1.

We experimented with multiple values of ε in offline analysis to select the threshold for online A/B testing. We evaluated three criteria: view count distribution, the percentage of promoted auction items receiving a nonzero impression count over one week (coverage), and uplift in CTR on randomly collected, unbiased data. ε = 0.05 gave the best results on all three criteria.
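Two of these offline metrics are simple enough to sketch directly. The function names and input shapes below are hypothetical; the definitions follow the text (coverage as the share of items with a nonzero impression count, uplift as the relative change in CTR against a control).

```python
def coverage(impressions_by_item):
    """Share of promoted auction items with a nonzero impression count."""
    if not impressions_by_item:
        return 0.0
    shown = sum(1 for n in impressions_by_item.values() if n > 0)
    return shown / len(impressions_by_item)


def ctr(clicks, impressions):
    """Click-through rate; defined as 0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0


def ctr_uplift(treatment_ctr, control_ctr):
    """Relative CTR change of the treatment over the control (control must be nonzero)."""
    if control_ctr == 0:
        raise ValueError("control CTR must be nonzero to compute uplift")
    return (treatment_ctr - control_ctr) / control_ctr
```

For example, with toy numbers, `coverage({"a": 10, "b": 0, "c": 3, "d": 0})` is 0.5, since two of the four items received at least one impression.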

The logistic regression model is trained on historical user behavior data to predict the auction item most likely to receive a user click, while itemscore1 prioritizes seller performance to meet the item view target. Hence, ε determines when to prioritize buyer relevance or seller performance, but the two objectives are optimized separately, unlike in the second approach, where both components are baked into a single heuristic. Thus, with ε = 0.05, 95% of recommendations will be based on logistic regression model results, while 5% of recommendations will be reserved for listings that require additional impressions in order to satisfy the target count.
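The switch between the two scorers can be sketched as below. This is an illustration under stated assumptions rather than the production logic: the candidate dictionary keys are hypothetical, and drawing d once per request (so that a whole recommendation set is ranked by a single scorer) is one reading of "every online inference".

```python
import random


def rank_candidates(candidates, epsilon=0.05, rng=None):
    """Rank PLX candidates for one request with an epsilon-greedy switch.

    Each candidate is a dict with hypothetical keys:
      "item_id", "item_score_1" (seller-side ratio score),
      "p_click" (click probability from the logistic regression model).
    Returns the ranked item ids and the name of the score that was used.
    """
    rng = rng or random.Random()
    d = rng.random()  # random decimal in [0, 1)
    # d > epsilon: rank by the model's predicted click probability.
    # Otherwise: rank by itemscore1 so under-viewed listings get impressions.
    key = "p_click" if d > epsilon else "item_score_1"
    ranked = sorted(candidates, key=lambda c: c[key], reverse=True)
    return [c["item_id"] for c in ranked], key
```

Because each branch sorts by only one score, the model output and itemscore1 never need to be on a comparable scale, which is the practical benefit of optimizing the two objectives separately.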

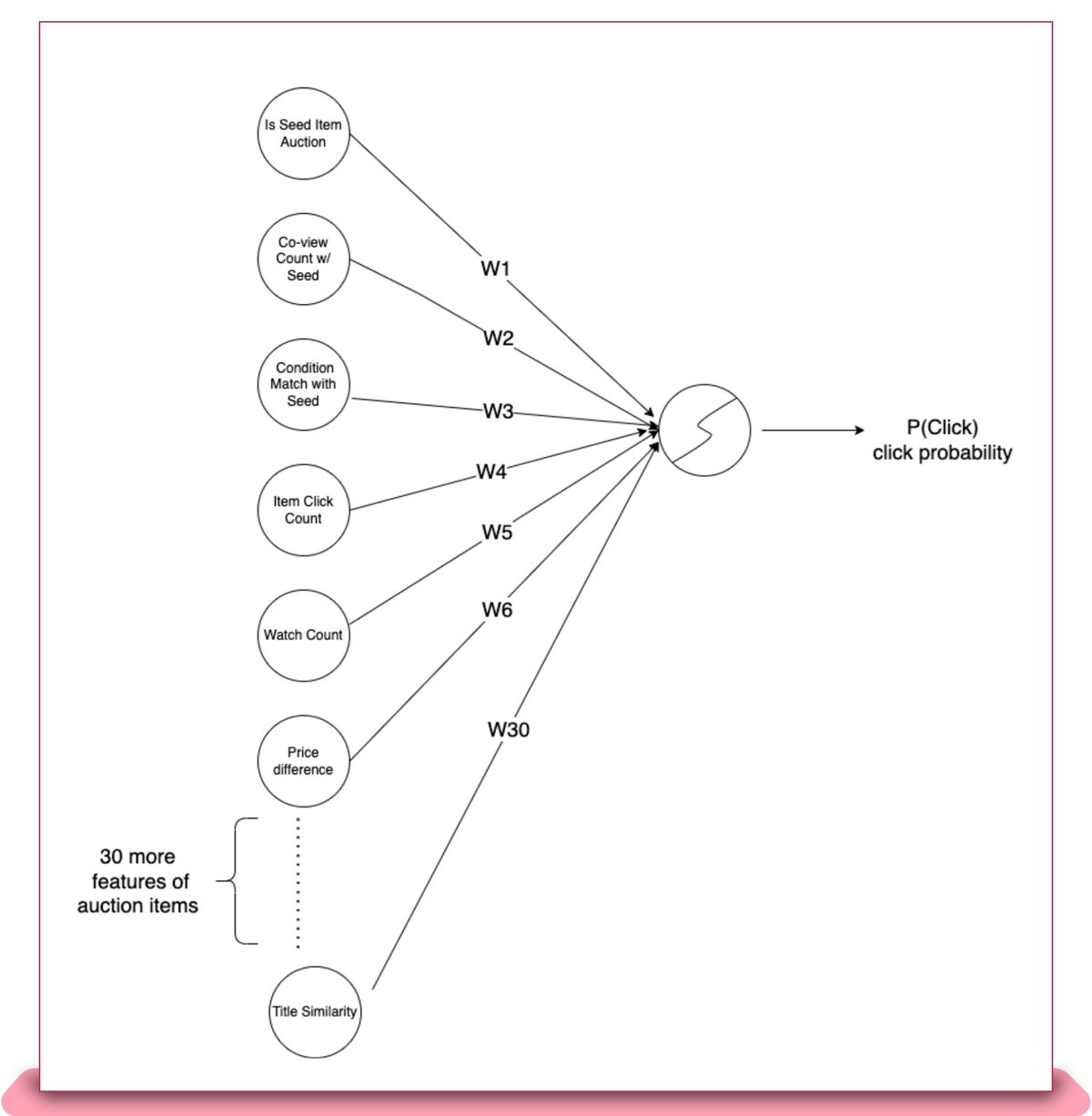

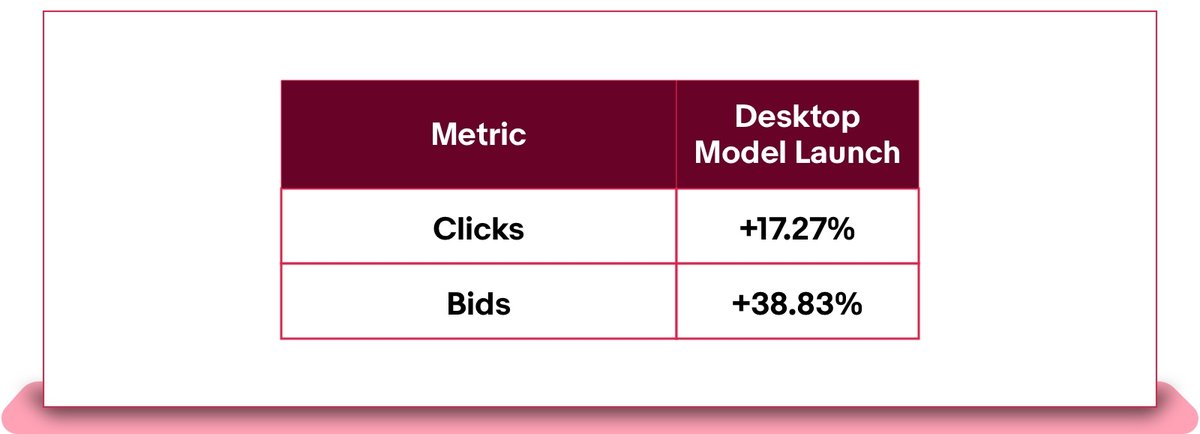

The machine learning model (displayed above) uses more than 30 features that characterize recommendation quality, user preference and seed-to-item similarity. The model was trained on roughly two weeks of click log data for promoted auction items. For this initial iteration of machine learning ranking, we chose a linear model like logistic regression due to ease of deployment to the production environment and to account for limited data as the inventory for PLX continues to ramp up. This machine-learning-based approach was A/B tested on desktop against the heuristic-based ranking approach as the control; results can be seen below, which indicate a strong uplift in buyer engagement:

As the inventory of promoted auction items expands, we will have enough data to try nonlinear machine learning models that better capture feature interactions. Thus, the next step is to leverage boosted trees, which have proven effective at modeling structured data.

Semih Bezci and Manas Rai also contributed to this article.