This is the first part of a three-part series about age verification in the European Union. In this blog post, we give an overview of the political debate around age verification and explore the age verification proposal introduced by the European Commission, based on digital identities. Part two takes a closer look at the European Commission’s age verification app, and part three explores measures to keep all users safe that do not require age checks.

As governments across the world pass laws to “keep children safe online,” more often than not, notions of safety rest on the ability of platforms, websites, and other online entities to discern users’ ages. This legislative trend has also arrived in the European Union, where online child safety is becoming one of the issues that will define European tech policy for years to come.

Like many policymakers elsewhere, European regulators are increasingly focused on a range of online harms they believe are associated with online platforms, such as compulsive design and the effects of social media consumption on children’s and teenagers’ mental health. Many of these concerns lack robust scientific evidence; studies have drawn a far more complex and nuanced picture of how social media and young people’s mental health interact. Still, calls for mandatory age verification have become as ubiquitous as they are trendy. Heads of state in France and Denmark have recently called for banning under-15-year-olds from social media Europe-wide, while Germany, Greece and Spain are working on their own age verification pilots.

EFF has been fighting age verification mandates because they undermine the free expression rights of adults and young people alike, create new barriers to internet access, and put at risk all internet users’ privacy, anonymity, and security. We do not think that requiring service providers to verify users’ age is the right approach to protecting people online.

Policymakers frame age verification as a necessary tool to prevent children from accessing content deemed unsuitable, to be able to design online services appropriate for children and teenagers, and to enable minors to participate online in age-appropriate ways. Rarely is it acknowledged that age verification undermines the privacy and free expression rights of all users, routinely blocks access to resources that can be life-saving, and undermines the development of media literacy. Rare, too, are critical conversations about the specific rights of young users: The UN Convention on the Rights of the Child clearly expresses that minors have rights to freedom of expression and access to information online, as well as the right to privacy. These rights are reflected in the European Charter of Fundamental Rights, which establishes the rights to privacy, data protection and free expression for all European citizens, including children. These rights would be steamrolled by age verification requirements. And rarer still are policy discussions of ways to improve these rights for young people.

Implicitly Mandatory Age Verification

Currently, there is no legal obligation to verify users’ age in the EU. However, different European legal acts that recently entered into force or are being discussed implicitly require providers to know users’ ages or suggest age assessments as a measure to mitigate risks for minors online. At EFF, we consider these proposals akin to mandates because there is often no alternative method to comply except to introduce age verification.

Under the General Data Protection Regulation (GDPR), in practice, providers will often need to implement some form of age verification or age assurance (depending on the type of service and risks involved): Article 8 stipulates that the processing of personal data of children under the age of 16 requires parental consent. Thus, service providers are implicitly required to make reasonable efforts to assess users’ ages – although the law doesn’t specify what “reasonable efforts” entails.

Another example is the child safety article (Article 28) of the Digital Services Act (DSA), the EU’s recently adopted legal framework for online platforms. It requires online platforms to take appropriate and proportionate measures to ensure a high level of safety, privacy and security of minors on their services. The article also prohibits targeting minors with personalized ads. The DSA acknowledges that there is an inherent tension between ensuring a minor’s privacy and taking measures to protect minors specifically, but it’s presently unclear which measures providers must take to comply with these obligations. Recital 71 of the DSA states that service providers should not be incentivized to collect the age of their users, and Article 28(3) makes a point of not requiring service providers to collect and process additional data to assess whether a user is underage. The European Commission is currently working on guidelines for the implementation of Article 28 and may come up with criteria for what it believes would be effective and privacy-preserving age verification.

The DSA does explicitly name age verification as one measure the largest platforms – so-called Very Large Online Platforms (VLOPs) that have more than 45 million monthly users in the EU – can choose to mitigate systemic risks related to their services. Those risks, while poorly defined, include negative impacts on the protection of minors and users’ physical and mental wellbeing. While this is also not an explicit obligation, the European Commission seems to expect adult content platforms to adopt age verification to comply with their risk mitigation obligations under the DSA.

Adding another layer of complexity, age verification is a major element of the dangerous European Commission proposal to fight child sexual abuse material through mandatory scanning of private and encrypted communication. While the negotiations of this bill have largely stalled, the Commission’s original proposal puts an obligation on app stores and interpersonal communication services (think messaging apps or email) to implement age verification. While the European Parliament has followed the advice of civil society organizations and experts and has rejected the notion of mandatory age verification in its position on the proposal, the Council, the institution representing member states, is still considering mandatory age verification.

Digital Identities and Age Verification

Leaving aside the various policy work streams that implicitly or explicitly consider whether age verification should be introduced across the EU, the European Commission seems to have decided on the how: Digital identities.

In 2024, the EU adopted the updated version of the so-called eIDAS Regulation, which sets out a legal framework for digital identities and authentication in Europe. Member States are now working on national identity wallets, with the goal of rolling out digital identities across the EU by 2026.

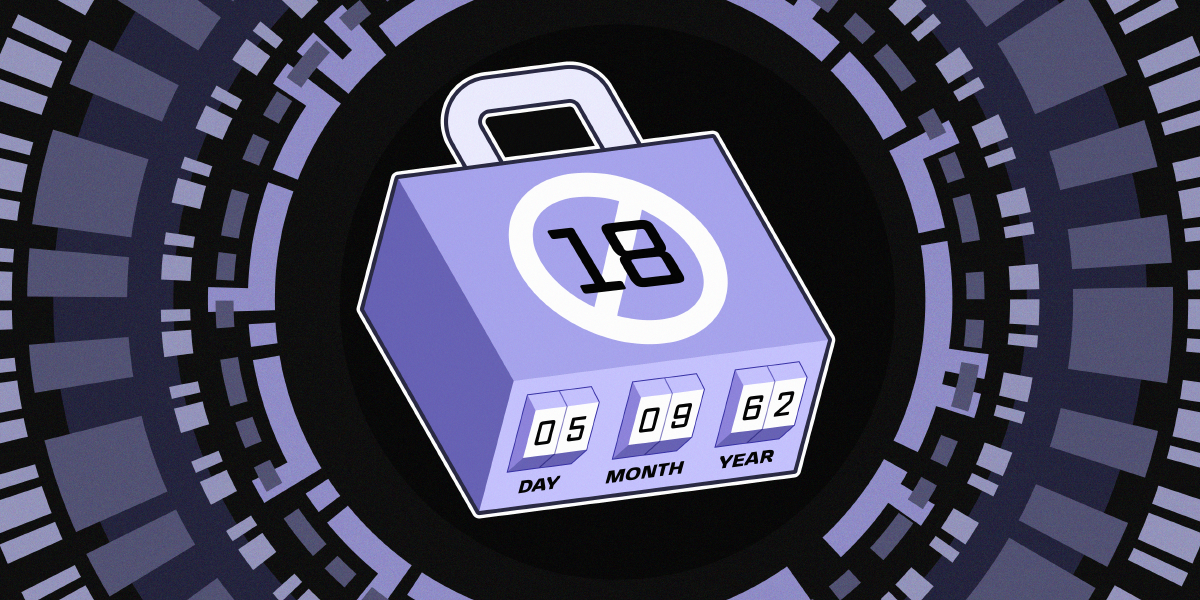

Despite the imminent rollout of digital identities in 2026, which could facilitate age verification, the European Commission clearly felt pressure to act sooner than that. That’s why, in the fall of 2024, the Commission published a tender for a “mini-ID wallet”, offering four million euros in exchange for the development of an “age verification solution” by the second quarter of 2025 to appease Member States anxious to introduce age verification today.

Favoring digital identities for age verification follows an overarching trend to push obligations to conduct age assessments continuously further down in the stack – from apps to app stores to operating system providers. Dealing with age verification at the app store, device, or operating system level is also a demand long made by providers of social media and dating apps seeking to avoid liability for insufficient age verification. Embedding age verification at the device level will make it more ubiquitous and harder to avoid. This is a dangerous direction; digital identity systems raise serious concerns about privacy and equity.

This approach will likely also lead to mission creep: While the Commission limits its tender to age verification for 18+ services (specifically adult content websites), it makes abundantly clear that, once available, age verification could be extended to “allow age-appropriate access whatever the age-restriction (13 or over, 16 or over, 65 or over, under 18 etc)”. Extending age verification is even more likely when digital identity wallets don’t come in the shape of an app, but are baked into operating systems.

In the next post of this series, we will be taking a closer look at the age verification app the European Commission has been working on.